Why Your Team’s AI Problem Is Actually a Writing Problem

The training lasted three days. By most measures, it went well. A room full of intelligent professionals worked through the demos, asked good questions, and left with a clearer picture of what AI tools could do. But when participants sat down in the weeks that followed to use the tools on actual work tasks, the outputs were vague. The suggestions were generic. The drafts needed so much revision that it often seemed faster to just write the thing from scratch.

This pattern has a recognizable shape. It’s the same one writing instructors see when students who already passed English move into upper-division coursework and their communication gaps resurface the moment the scaffolding drops. AI training follows the same curve. The tools get learned, but the confidence doesn’t follow. And the gap between the two usually isn’t a technology problem—it’s the same communication gap that was already there. It’s a writing problem.

The Hidden Skill Gap

When organizations invest in AI tools, they tend to frame the challenge as a technical one. The questions that come up first are usually familiar:

Which platform should we use?

How do we integrate it with our existing systems?

What’s the security policy?

These are real questions. But they’re downstream of a more fundamental issue: the quality of what you get out of an AI system depends largely on the quality of what you put in, and that depends on your ability to communicate clearly in writing.

The professionals who struggle most are often the ones who haven’t fully worked out who they’re communicating to or what the output needs to accomplish. In writing instruction, these are the foundational questions: audience and purpose. Every document, every email, every slide deck has both. When either one is fuzzy, the output suffers—regardless of the writer’s intelligence or effort. AI amplifies this. A vague sense of audience produces a prompt that could mean almost anything, and an AI trained on almost everything will give you something that fits almost nowhere.

The same failure shows up across formats: a PowerPoint with no clear argument, a data visualization that displays information without communicating it, a summary that covers the content but misses the point. These aren’t tool failures. They’re audience-and-purpose failures the tool has no way to compensate for.

Prompts Are Writing

Writing a good prompt is writing a good instruction. The technical mystique around “prompt engineering” can obscure something much simpler. The skills that make any professional writing effective are the same ones that make AI prompts work:

Clarity of purpose — knowing what you actually want the output to accomplish

Audience awareness — specifying who the output is for and what they need

Precision of language — giving enough context that nothing important is assumed

The willingness to revise — treating the first output as a draft, not a verdict

A useful diagnostic: ask someone on your team to write a prompt for a task they do regularly, then read it back as if they were a new employee receiving it as instructions. Almost without fail, something breaks down. Context is assumed. The audience is unnamed. Tone is unspecified. Scope is unclear. These problems existed long before AI—in emails that required three follow-ups, in project briefs everyone interpreted differently. AI just surfaces them faster.
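One way to make that read-back concrete is to treat the prompt as a brief with named parts and check which ones were left blank. What follows is a minimal Python sketch, not a method from the training. The `PromptBrief` class, its field names, and the sample task are all illustrative assumptions, chosen to mirror the gaps listed above.

```python
from __future__ import annotations

from dataclasses import dataclass, fields


@dataclass
class PromptBrief:
    """Hypothetical container for the parts a new employee would need spelled out."""
    purpose: str = ""   # what the output needs to accomplish
    audience: str = ""  # who the output is for and what they need
    context: str = ""   # background the writer might otherwise assume
    tone: str = ""      # e.g. formal, plain, persuasive
    scope: str = ""     # length, format, what to leave out


def missing_parts(brief: PromptBrief) -> list[str]:
    """Return the names of any parts left blank, in declaration order."""
    return [f.name for f in fields(brief) if not getattr(brief, f.name).strip()]


def render(brief: PromptBrief) -> str:
    """Assemble the filled-in parts into plain-text instructions."""
    lines = [
        f"{f.name.capitalize()}: {getattr(brief, f.name).strip()}"
        for f in fields(brief)
        if getattr(brief, f.name).strip()
    ]
    return "\n".join(lines)


# A made-up first draft: the writer knows the task but has named nothing else.
draft = PromptBrief(purpose="Summarize the incident report")
print(missing_parts(draft))  # ['audience', 'context', 'tone', 'scope']
```

A non-empty result doesn’t mean the prompt is bad, only that a reader, human or AI, would have to guess at those parts.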

The Revision Loop

Good writers don’t write in a straight line. The process is recursive: draft, re-read, revise, reconsider, repeat. Using AI effectively works the same way. The professionals who get lasting value from these tools treat AI outputs the way a good writer treats a first draft: useful as a starting point, worth engaging with critically, and improvable with specific feedback. In practice, that looks like:

Working from real tasks, so the revision cycle is grounded in actual stakes

Reading outputs critically: noticing when something sounds right but is vague, or when a summary has dropped important nuance

Treating the prompt as a draft and the revision as the actual work

Knowing what AI does well (reorganizing material, generating options, accelerating early drafts) and where human judgment can’t be outsourced

The participants in that three-day training who made the most progress weren’t the ones with the strongest technical backgrounds. They were the ones who already knew how to read something carefully and push back on it. That’s a writing instinct—the same one those writing instructors are still trying to develop in students who “already took English.” The tool is different. The skill gap is the same.

The Underlying Question

Before rolling out an AI tool, the most useful question isn’t “Which platform should we use?” It’s: “How well does our team already communicate in writing?” Not as a gotcha—most teams have real strengths and specific gaps—but as a diagnostic. The teams that get lasting value from AI are almost always the ones who have thought carefully about audience, purpose, and what their documents are actually trying to do.

AI doesn’t fix unclear thinking. It reflects it back faster. The platform matters less than you think. The writing skills matter more than most trainers will tell you. And the participants who walked out of that three-day training with real capability weren’t the ones who learned the most about the tools—they were the ones who left with a clearer sense of how to say what they meant, and a dawning recognition that this had always been the harder problem.

Has your team run into this pattern—where the real bottleneck in AI adoption turned out to be something other than the technology itself? I’d be curious what that looked like in practice.

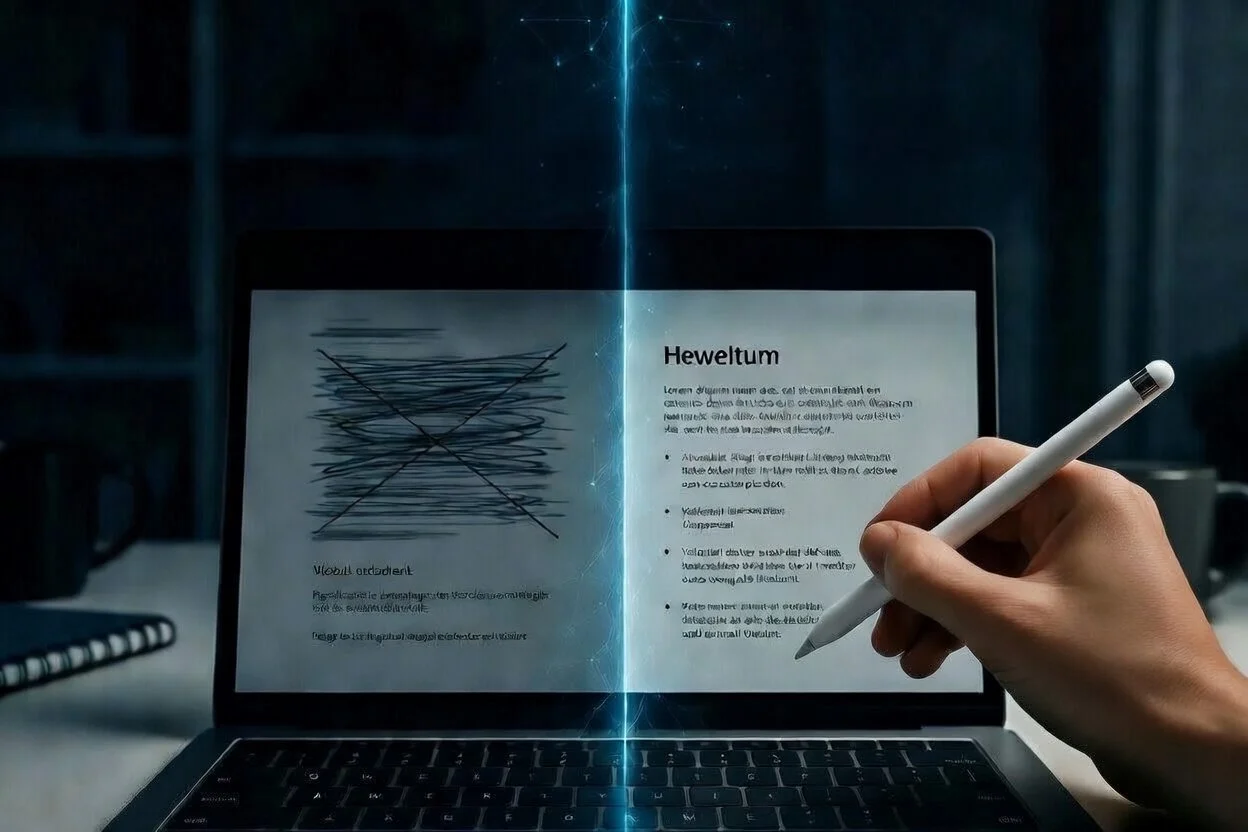

This post was drafted and revised using Claude as a writing tool. The ideas, structure, and voice are mine—the AI handled the keystrokes. Image generated with Grok.